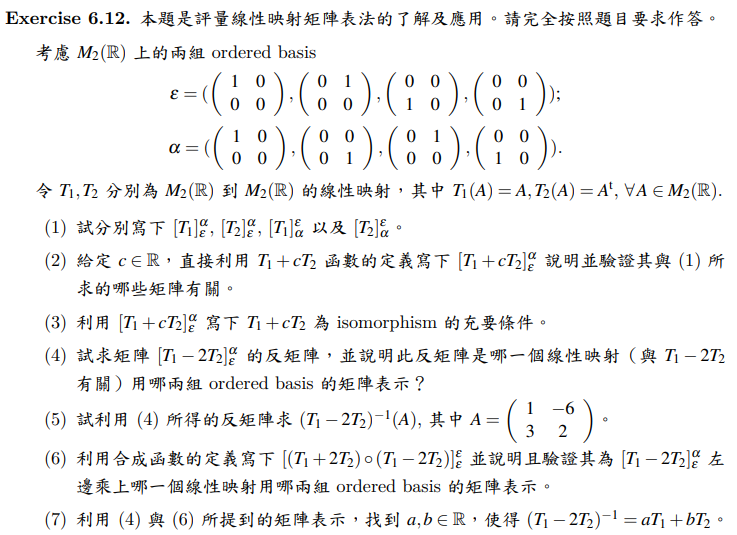

Discussion

(2) Reference answer

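Part (2) follows directly from the definition of the matrix of a linear map: substitute the basis vectors of \(\varepsilon=(E_{11},E_{12},E_{21},E_{22})\) one at a time into \(T_1+cT_2\), and write each image in \(\alpha\)-coordinates.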

Since

\[
T_1(A)=A,\qquad T_2(A)=A^t,
\]

we have

\[
(T_1+cT_2)(A)=A+cA^t.
\]

Now compute the image of each basis vector of \(\varepsilon\):

\[
(T_1+cT_2)(E_{11})=E_{11}+cE_{11}=(1+c)E_{11}.
\]

In \(\alpha\)-coordinates this is

\[
[(T_1+cT_2)(E_{11})]_{\alpha}=
\begin{pmatrix}
1+c\\0\\0\\0
\end{pmatrix}.
\]

Similarly,

\[
(T_1+cT_2)(E_{12})=E_{12}+cE_{21}.
\]

Since \(\alpha=(E_{11},E_{22},E_{12},E_{21})\), its \(\alpha\)-coordinate vector is

\[
[(T_1+cT_2)(E_{12})]_{\alpha}=
\begin{pmatrix}
0\\0\\1\\c
\end{pmatrix}.
\]

Next,

\[
(T_1+cT_2)(E_{21})=E_{21}+cE_{12},
\]

so its \(\alpha\)-coordinate vector is

\[
[(T_1+cT_2)(E_{21})]_{\alpha}=
\begin{pmatrix}
0\\0\\c\\1
\end{pmatrix}.
\]

Finally,

\[
(T_1+cT_2)(E_{22})=E_{22}+cE_{22}=(1+c)E_{22},
\]

which in \(\alpha\)-coordinates is

\[
[(T_1+cT_2)(E_{22})]_{\alpha}=
\begin{pmatrix}
0\\1+c\\0\\0
\end{pmatrix}.
\]

Placing these coordinate vectors as columns gives

\[
[T_1+cT_2]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
1+c&0&0&0\\
0&0&0&1+c\\
0&1&c&0\\
0&c&1&0
\end{pmatrix}.
\]

On the other hand, from part (1),

\[
[T_1]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&1&0&0\\
0&0&1&0
\end{pmatrix},
\qquad
[T_2]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&0&1&0\\
0&1&0&0
\end{pmatrix}.
\]

Hence

\[
\begin{aligned}
[T_1]_{\varepsilon}^{\alpha}+c[T_2]_{\varepsilon}^{\alpha}
&=
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&1&0&0\\
0&0&1&0
\end{pmatrix}
+c
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&0&1&0\\
0&1&0&0
\end{pmatrix}\\
&=
\begin{pmatrix}
1+c&0&0&0\\
0&0&0&1+c\\
0&1&c&0\\
0&c&1&0
\end{pmatrix}.
\end{aligned}
\]

Therefore

\[
[T_1+cT_2]_{\varepsilon}^{\alpha}=[T_1]_{\varepsilon}^{\alpha}+c[T_2]_{\varepsilon}^{\alpha}.
\]
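As a quick cross-check of (2), here is a minimal Python sketch using only the standard library. The encoding of a basis as a list of index pairs and the helper names `E`, `coords`, `matrix_of`, and `combo` are illustrative assumptions, not part of the original answer. It builds \([T]_{\beta}^{\gamma}\) the same way as above (column \(k\) is the \(\gamma\)-coordinate vector of the image of the \(k\)-th vector of \(\beta\)) and compares \([T_1+cT_2]_{\varepsilon}^{\alpha}\) with \([T_1]_{\varepsilon}^{\alpha}+c[T_2]_{\varepsilon}^{\alpha}\) for one test value of \(c\).

```python
from fractions import Fraction

# Hypothetical encoding (illustration only): a basis is an ordered list of
# index pairs (i, j), each standing for the matrix unit E_ij.
EPS = [(1, 1), (1, 2), (2, 1), (2, 2)]    # epsilon = (E11, E12, E21, E22)
ALPHA = [(1, 1), (2, 2), (1, 2), (2, 1)]  # alpha   = (E11, E22, E12, E21)


def E(i, j):
    """Matrix unit E_ij (1-indexed) as a 2x2 nested tuple."""
    return tuple(tuple(1 if (r, s) == (i, j) else 0 for s in (1, 2)) for r in (1, 2))


def coords(A, basis):
    """Coordinates of A in a basis of matrix units: read the entries in basis order."""
    return [A[i - 1][j - 1] for (i, j) in basis]


def matrix_of(T, dom, cod):
    """[T]_dom^cod: column k is the cod-coordinate vector of T(dom[k])."""
    cols = [coords(T(E(i, j)), cod) for (i, j) in dom]
    return [[cols[k][r] for k in range(4)] for r in range(4)]


def T1(A):
    return A  # identity map


def T2(A):
    return tuple(zip(*A))  # transpose


def combo(c):
    """The map T1 + c*T2, i.e. A -> A + c*A^t."""
    def T(A):
        return tuple(tuple(x + c * y for x, y in zip(r1, r2))
                     for r1, r2 in zip(T1(A), T2(A)))
    return T


c = Fraction(3)  # arbitrary test value
lhs = matrix_of(combo(c), EPS, ALPHA)
M1, M2 = matrix_of(T1, EPS, ALPHA), matrix_of(T2, EPS, ALPHA)
rhs = [[M1[r][k] + c * M2[r][k] for k in range(4)] for r in range(4)]
print(lhs == rhs)  # True
```

Because both bases consist of matrix units, `coords` can simply read entries off in basis order; a general basis would require solving a linear system instead.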

(3) Reference answer

From (2),

\[
[T_1+cT_2]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
1+c&0&0&0\\
0&0&0&1+c\\
0&1&c&0\\
0&c&1&0
\end{pmatrix}.
\]

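The linear map \(T_1+cT_2\) is an isomorphism if and only if its matrix representation is invertible:

\[
T_1+cT_2 \text{ is an isomorphism}
\iff [T_1+cT_2]_{\varepsilon}^{\alpha} \text{ is invertible}
\iff \det([T_1+cT_2]_{\varepsilon}^{\alpha})\neq 0.
\]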

Expanding the determinant by cofactors gives \(\det([T_1+cT_2]_{\varepsilon}^{\alpha})=(1+c)^3(1-c)\), so the determinant is nonzero if and only if

\[
1+c\neq 0\quad\text{and}\quad 1-c\neq 0.
\]
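For reference, the cofactor expansion behind this determinant can be written out as follows (expanding along the first row, then along the first row of the resulting \(3\times 3\) minor, where the only nonzero entry sits in position \((1,3)\) with sign \((-1)^{1+3}=+1\)):

\[
\begin{aligned}
\det\begin{pmatrix}
1+c&0&0&0\\
0&0&0&1+c\\
0&1&c&0\\
0&c&1&0
\end{pmatrix}
&=(1+c)\det\begin{pmatrix}
0&0&1+c\\
1&c&0\\
c&1&0
\end{pmatrix}\\
&=(1+c)(1+c)\det\begin{pmatrix}
1&c\\
c&1
\end{pmatrix}\\
&=(1+c)^2(1-c^2)=(1+c)^3(1-c).
\end{aligned}
\]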

Hence the necessary and sufficient condition is

\[
c\neq 1\quad\text{and}\quad c\neq -1,
\]

that is,

\[
T_1+cT_2 \text{ is an isomorphism } \iff c\neq \pm 1.
\]
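A quick exact-arithmetic cross-check of (3), as a minimal sketch: the Python below (standard library only; `det` and `rep` are hypothetical helper names, not from the original answer) evaluates the determinant by the same first-row cofactor expansion and compares it with \((1+c)^3(1-c)\) at several values of \(c\).

```python
from fractions import Fraction


def det(M):
    """Determinant by cofactor (Laplace) expansion along the first row."""
    if len(M) == 1:
        return M[0][0]
    total = 0
    for k, entry in enumerate(M[0]):
        if entry == 0:
            continue  # zero entries contribute nothing to the expansion
        minor = [row[:k] + row[k + 1:] for row in M[1:]]
        total += (-1) ** k * entry * det(minor)
    return total


def rep(c):
    """Hypothetical helper: [T1 + c T2]_eps^alpha as computed in part (2)."""
    return [[1 + c, 0, 0, 0],
            [0, 0, 0, 1 + c],
            [0, 1, c, 0],
            [0, c, 1, 0]]


# Compare with the closed form (1 + c)^3 (1 - c) at c = -3, -5/2, ..., 3.
for n in range(-6, 7):
    c = Fraction(n, 2)
    assert det(rep(c)) == (1 + c) ** 3 * (1 - c)

print(det(rep(1)), det(rep(-1)), det(rep(-2)))  # 0 0 -3
```

The determinant vanishes exactly at \(c=\pm1\), matching the condition above; \(c=-2\) (used in (4)) gives \(-3\neq 0\).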

(4) Reference answer

Take \(c=-2\). Then

\[
[T_1-2T_2]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
-1&0&0&0\\
0&0&0&-1\\
0&1&-2&0\\
0&-2&1&0
\end{pmatrix}.
\]

We want the inverse matrix \(([T_1-2T_2]_{\varepsilon}^{\alpha})^{-1}\). It is easiest to first find the inverse of the linear map \(T_1-2T_2\) itself.

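Since transposing twice returns the original matrix, \(T_2^2=T_1\). As \(T_1\) is the identity map, for any scalars \(a,b\) we get

\[
(T_1-2T_2)(aT_1+bT_2)=aT_1+bT_2-2aT_2-2bT_2^2=(a-2b)T_1+(b-2a)T_2.
\]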

For this to equal \(T_1\), we need

\[
a-2b=1,\qquad b-2a=0.
\]

The second equation gives \(b=2a\). Substituting into the first:

\[
a-4a=1\ \Rightarrow\ -3a=1\ \Rightarrow\ a=-\frac13,
\]

and therefore \(b=-\frac23\).

Thus

\[
(T_1-2T_2)^{-1}=-\frac13 T_1-\frac23 T_2.
\]
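Substituting back confirms the result. Because \(T_1\) is the identity map, \(T_1\) and \(T_2\) commute, so this computation also shows the map is a two-sided inverse:

\[
(T_1-2T_2)\left(-\tfrac13T_1-\tfrac23T_2\right)
=-\tfrac13T_1-\tfrac23T_2+\tfrac23T_2+\tfrac43T_2^2
=-\tfrac13T_1+\tfrac43T_1
=T_1.
\]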

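The inverse matrix is then the matrix of this inverse map with domain basis \(\alpha\) and codomain basis \(\varepsilon\), because \([(T_1-2T_2)^{-1}]_{\alpha}^{\varepsilon}\,[T_1-2T_2]_{\varepsilon}^{\alpha}=[T_1]_{\varepsilon}^{\varepsilon}=I\). That is,

\[
([T_1-2T_2]_{\varepsilon}^{\alpha})^{-1}=[(T_1-2T_2)^{-1}]_{\alpha}^{\varepsilon}.
\]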

Next, compute the image of each \(\alpha\) basis vector under \((T_1-2T_2)^{-1}\):

\[
\begin{aligned}
(T_1-2T_2)^{-1}(E_{11})&=-E_{11},\\
(T_1-2T_2)^{-1}(E_{22})&=-E_{22},\\
(T_1-2T_2)^{-1}(E_{12})&=-\frac13E_{12}-\frac23E_{21},\\
(T_1-2T_2)^{-1}(E_{21})&=-\frac23E_{12}-\frac13E_{21}.
\end{aligned}
\]

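Writing these images in \(\varepsilon\)-coordinates and placing them as columns (in the order of \(\alpha\)) gives

\[
([T_1-2T_2]_{\varepsilon}^{\alpha})^{-1}=
\begin{pmatrix}
-1&0&0&0\\
0&0&-\frac13&-\frac23\\
0&0&-\frac23&-\frac13\\
0&-1&0&0
\end{pmatrix}.
\]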
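To double-check (4), a minimal Python sketch (standard library only; `matmul`, `T`, and `S` are hypothetical helper names, not from the original answer) multiplies the two \(4\times4\) matrices exactly and also applies \(S=-\frac13T_1-\frac23T_2\) directly to a sample \(2\times2\) matrix:

```python
from fractions import Fraction as F


def matmul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


M = [[-1, 0, 0, 0],
     [0, 0, 0, -1],
     [0, 1, -2, 0],
     [0, -2, 1, 0]]                 # [T1 - 2T2]_eps^alpha
N = [[-1, 0, 0, 0],
     [0, 0, F(-1, 3), F(-2, 3)],
     [0, 0, F(-2, 3), F(-1, 3)],
     [0, -1, 0, 0]]                 # claimed inverse [(T1 - 2T2)^{-1}]_alpha^eps
I4 = [[int(i == j) for j in range(4)] for i in range(4)]


def T(A):
    """Hypothetical helper: (T1 - 2T2)(A) = A - 2A^t on 2x2 matrices."""
    return [[A[i][j] - 2 * A[j][i] for j in range(2)] for i in range(2)]


def S(A):
    """Hypothetical helper: the claimed inverse map -1/3 A - 2/3 A^t."""
    return [[F(-1, 3) * A[i][j] - F(2, 3) * A[j][i] for j in range(2)] for i in range(2)]


A = [[1, 2], [3, 4]]  # arbitrary test matrix
print(matmul(M, N) == I4, matmul(N, M) == I4)  # True True
print(S(T(A)) == A, T(S(A)) == A)              # True True
```

Using `Fraction` keeps the entries \(-\frac13,-\frac23\) exact, so the comparison with the identity is not affected by floating-point rounding.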

(1) Reference answer

By definition,

\[
T_1(E_{11})=E_{11},\quad
T_1(E_{12})=E_{12},\quad
T_1(E_{21})=E_{21},\quad
T_1(E_{22})=E_{22}.
\]

Write these vectors in \(\alpha\)-coordinates:

\[
[E_{11}]_{\alpha}=\begin{pmatrix}1\\0\\0\\0\end{pmatrix},\quad
[E_{12}]_{\alpha}=\begin{pmatrix}0\\0\\1\\0\end{pmatrix},\quad
[E_{21}]_{\alpha}=\begin{pmatrix}0\\0\\0\\1\end{pmatrix},\quad
[E_{22}]_{\alpha}=\begin{pmatrix}0\\1\\0\\0\end{pmatrix}.
\]

Therefore

\[
[T_1]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&1&0&0\\
0&0&1&0
\end{pmatrix}.
\]

Next,

\[
T_2(E_{11})=E_{11},\quad
T_2(E_{12})=E_{21},\quad
T_2(E_{21})=E_{12},\quad
T_2(E_{22})=E_{22}.
\]

In \(\alpha\)-coordinates:

\[
[E_{11}]_{\alpha}=\begin{pmatrix}1\\0\\0\\0\end{pmatrix},\quad
[E_{21}]_{\alpha}=\begin{pmatrix}0\\0\\0\\1\end{pmatrix},\quad
[E_{12}]_{\alpha}=\begin{pmatrix}0\\0\\1\\0\end{pmatrix},\quad
[E_{22}]_{\alpha}=\begin{pmatrix}0\\1\\0\\0\end{pmatrix}.
\]

Therefore

\[
[T_2]_{\varepsilon}^{\alpha}=
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&0&1&0\\
0&1&0&0
\end{pmatrix}.
\]

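We now compute the matrices with domain basis \(\alpha\) and codomain basis \(\varepsilon\), where, as before,

\[
\varepsilon=(E_{11},E_{12},E_{21},E_{22}),\qquad
\alpha=(E_{11},E_{22},E_{12},E_{21}).
\]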

Since \(T_1(A)=A\), we have

\[
T_1(E_{11})=E_{11},\quad
T_1(E_{22})=E_{22},\quad
T_1(E_{12})=E_{12},\quad
T_1(E_{21})=E_{21}.
\]

Write them in \(\varepsilon\)-coordinates:

\[
[E_{11}]_{\varepsilon}=\begin{pmatrix}1\\0\\0\\0\end{pmatrix},\quad
[E_{22}]_{\varepsilon}=\begin{pmatrix}0\\0\\0\\1\end{pmatrix},\quad
[E_{12}]_{\varepsilon}=\begin{pmatrix}0\\1\\0\\0\end{pmatrix},\quad
[E_{21}]_{\varepsilon}=\begin{pmatrix}0\\0\\1\\0\end{pmatrix}.
\]

Therefore

\[
[T_1]_{\alpha}^{\varepsilon}=
\begin{pmatrix}
1&0&0&0\\
0&0&1&0\\
0&0&0&1\\
0&1&0&0
\end{pmatrix}.
\]

Moreover, since \(T_2(A)=A^t\),

\[
T_2(E_{11})=E_{11},\quad
T_2(E_{22})=E_{22},\quad
T_2(E_{12})=E_{21},\quad
T_2(E_{21})=E_{12}.
\]

Write them in \(\varepsilon\)-coordinates:

\[
[E_{11}]_{\varepsilon}=\begin{pmatrix}1\\0\\0\\0\end{pmatrix},\quad
[E_{22}]_{\varepsilon}=\begin{pmatrix}0\\0\\0\\1\end{pmatrix},\quad
[E_{21}]_{\varepsilon}=\begin{pmatrix}0\\0\\1\\0\end{pmatrix},\quad
[E_{12}]_{\varepsilon}=\begin{pmatrix}0\\1\\0\\0\end{pmatrix}.
\]

Therefore

\[
[T_2]_{\alpha}^{\varepsilon}=
\begin{pmatrix}
1&0&0&0\\
0&0&0&1\\
0&0&1&0\\
0&1&0&0
\end{pmatrix}.
\]
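The matrices in (1) can be cross-checked the same way. In this minimal sketch (standard library only; the basis encoding and the helper names `E`, `matrix_of`, and `matmul` are illustrative assumptions), \([T_1]_{\alpha}^{\varepsilon}\) should be the inverse of \([T_1]_{\varepsilon}^{\alpha}\), since \(T_1\) is the identity and \([T_1]_{\alpha}^{\varepsilon}[T_1]_{\varepsilon}^{\alpha}=[T_1]_{\varepsilon}^{\varepsilon}=I\).

```python
# Hypothetical encoding (illustration only): index pairs (i, j) stand for E_ij.
EPS = [(1, 1), (1, 2), (2, 1), (2, 2)]    # epsilon = (E11, E12, E21, E22)
ALPHA = [(1, 1), (2, 2), (1, 2), (2, 1)]  # alpha   = (E11, E22, E12, E21)


def E(i, j):
    """Matrix unit E_ij (1-indexed) as a 2x2 nested tuple."""
    return tuple(tuple(1 if (r, s) == (i, j) else 0 for s in (1, 2)) for r in (1, 2))


def matrix_of(T, dom, cod):
    """[T]_dom^cod: column k holds the cod-coordinates of T(dom[k]).

    Coordinates are read off entry by entry, which works only because
    every basis vector here is a matrix unit.
    """
    cols = [[T(E(i, j))[p - 1][q - 1] for (p, q) in cod] for (i, j) in dom]
    return [[cols[k][r] for k in range(4)] for r in range(4)]


def matmul(X, Y):
    """Product of two 4x4 matrices given as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def T1(A):
    return A  # identity map


def T2(A):
    return tuple(zip(*A))  # transpose


I4 = [[int(i == j) for j in range(4)] for i in range(4)]
P = matrix_of(T1, EPS, ALPHA)   # [T1]_eps^alpha
Q = matrix_of(T1, ALPHA, EPS)   # [T1]_alpha^eps
print(Q)                        # [[1, 0, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1], [0, 1, 0, 0]]
print(matmul(Q, P) == I4)       # True
print(matrix_of(T2, ALPHA, EPS))  # [[1, 0, 0, 0], [0, 0, 0, 1], [0, 0, 1, 0], [0, 1, 0, 0]]
```

Since both bases list the same matrix units in different orders, \([T_1]_{\varepsilon}^{\alpha}\) is a permutation matrix and \([T_1]_{\alpha}^{\varepsilon}\) is its transpose.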